wiiHack – Montreal

First notes from the wiimote weekend at Hexagram (Montreal).

Spent yesterday compiling a list of tactics for accessing data. Will post full results as today unfolds.

Connecting a chuck to an Arduino

The solution I am working on is based upon code and examples from:

Code:

TodBot: code examples, and the developer of the physical adapter I am using. This is a great resource, so you may want to start there if you are stuck.

Hardware

The adapter I am using can be found at SparkFun.

High Level Map

The chuck speaks I2C, a form of serial communication. You need two libraries to make this code work. The first is Wire.h, which ships with Arduino. The second is some form of the wiiChuck libraries that others are developing. I have pulled two pieces of code from the above sites to create my solution.

There is nothing particularly novel about this stage — I just moved the power code block from Todbot over to the library from growdown. The samples I found seemed to have this missing.

I am still cleaning up the code, but this bundle is working for me. Set your serial monitor to 19200 baud to see the data in the Arduino environment.
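
If you are curious what happens to the bytes once they arrive over I2C, here is a minimal sketch of the decoding step. It assumes the commonly documented 6-byte nunchuck report layout and is not the TodBot or growdown code; the names ChuckState, decodeByte and parseChuck are made up for illustration.

```cpp
#include <cassert>
#include <cstddef>
#include <cstdint>

// Decoded view of one 6-byte nunchuck report (assumed layout).
struct ChuckState {
    uint8_t joyX, joyY;         // joystick axes, 0..255
    uint16_t accX, accY, accZ;  // 10-bit accelerometer axes, 0..1023
    bool zPressed, cPressed;    // button states
};

// Older init sequences leave the data lightly obfuscated;
// each raw byte is recovered with (b XOR 0x17) + 0x17.
inline uint8_t decodeByte(uint8_t b) {
    return static_cast<uint8_t>((b ^ 0x17) + 0x17);
}

// buf holds the 6 bytes read over I2C, already passed through decodeByte().
ChuckState parseChuck(const uint8_t* buf, size_t len) {
    assert(len >= 6);  // a full report is always 6 bytes
    ChuckState s;
    s.joyX = buf[0];
    s.joyY = buf[1];
    // Bytes 2..4 carry the top 8 bits of each axis; byte 5 carries the low 2 bits.
    s.accX = static_cast<uint16_t>((buf[2] << 2) | ((buf[5] >> 2) & 0x03));
    s.accY = static_cast<uint16_t>((buf[3] << 2) | ((buf[5] >> 4) & 0x03));
    s.accZ = static_cast<uint16_t>((buf[4] << 2) | ((buf[5] >> 6) & 0x03));
    // Buttons are active low: a 0 bit means the button is pressed.
    s.zPressed = (buf[5] & 0x01) == 0;
    s.cPressed = (buf[5] & 0x02) == 0;
    return s;
}
```

In an Arduino sketch you would fill buf from Wire.read() six times and then print the fields to the serial monitor.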


Camille Utterback — Kodak Lecture

On Friday, May 1 at 7:30pm, the New Media option at the School of Image Arts (Ryerson University), in association with the Kodak Lecture Series, will be hosting a talk by interactive installation artist Camille Utterback.

Camille Utterback is an internationally acclaimed artist whose interactive installations engage participants in a dynamic process of kinesthetic discovery and play. Utterback’s work explores the aesthetic and experimental possibilities of linking computational systems to human movement and gesture. By creating installations that use video tracking software to respond to a user’s body, she creates a visceral connection between the real and the virtual. Her work focuses attention on the continued relevance and richness of the body in our increasingly mediated world.

WHEN: Friday May 1, 2009, 7:30pm

WHERE: Library Building, Room 72 (350 Victoria Street at Gould Street)

FREE: Arrive early for guaranteed seating

Visit www.ryersonlectures.ca

Building a Simple Network

Network building will be introduced this week.

To complete this exercise, you will need to be able to find your machine’s IP. If you are unsure how to do that on a lab computer, check here.

Using the Server:

The server broadcasts sensor values to all connected clients. This is a simple starting point for building distributed haptic experiences. This code could be used in other contexts as well.

Start by setting up the Arduino and sensor. Upload the Arduino code, simple_SensorForServer.pde, and connect an analog sensor (I am using a 1k pot) to analog input pin 0 (zero). Next, connect an LED to PWM pin 11. Adjusting the sensor should alter the brightness of the LED.
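
The sensor-to-brightness step comes down to squeezing a 10-bit reading into an 8-bit PWM value. Here is a minimal sketch of that arithmetic; it is an illustration only, not the contents of simple_SensorForServer.pde, and sensorToPwm is a made-up name.

```cpp
#include <cassert>

// Scale a 10-bit analogRead() reading (0..1023) to an 8-bit
// analogWrite() duty cycle (0..255).
int sensorToPwm(int raw) {
    if (raw < 0) raw = 0;        // guard against out-of-range input
    if (raw > 1023) raw = 1023;
    int duty = raw / 4;          // 1024 steps -> 256 steps
    assert(duty >= 0 && duty <= 255);
    return duty;
}
```

Inside loop() this would be used as analogWrite(11, sensorToPwm(analogRead(0))). The result also fits in a single byte, which is handy when sending it over serial.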

Next, start the server by running the Processing sketch entitled simple_teleServer.pde. Sensor data should be picked up immediately. Adjust the sensor again — a rectangle in the upper right of the server window should change colors.

Now, connect some clients.

The client (simple_teleClient.pde) is slightly easier to set up than the server. All you need to do is set the server’s IP inside the client sketch and launch it.

To do this, find the IP of the machine on which you are running the server (see above if you are unsure how to do this; the instructions are for Tiger, not Leopard, but should be clear enough). Once you have the IP, you have to make a change in the client sketch. The original line will look something like this:

teleClient = new Client(this, "127.0.0.1", 12345);

Change the "127.0.0.1" to your server’s IP (keep the IP in quotes).

Now, launch the client. If you connect, the server will display you on its screen.

You should also try this with an Arduino on the client side. To do this you will need to set up an LED as you did above. Again, nothing fancy: just insert it directly into your Arduino, flat side to ground, other leg to PIN 11.

Upload the code simple_valueOutput.pde to your client Arduino. This code receives data from the client sketch and converts it into LED brightness.
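
Turning incoming data into a brightness can be sketched roughly as below. This assumes, purely for illustration, that values arrive as a line of decimal text; the real simple_valueOutput.pde may use a different format, and parseBrightness is a made-up name.

```cpp
#include <cassert>
#include <cstdlib>

// Parse one line of decimal text into a PWM brightness.
// Returns 0..255, or -1 if the line does not start with a number.
int parseBrightness(const char* line) {
    assert(line != nullptr);
    char* end = nullptr;
    long v = std::strtol(line, &end, 10);
    if (end == line) return -1;  // no digits were consumed
    if (v < 0) v = 0;            // clamp to the range analogWrite() accepts
    if (v > 255) v = 255;
    return static_cast<int>(v);
}
```

Clamping means a stray large value from the network cannot push the LED outside its valid range, and the -1 result lets the sketch ignore garbage rather than flicker.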

You should be able to control both LEDs from the single sensor.

Communicating with Xbees

This is a quick post — will clarify ASAP.

This post covers a setup for communicating between two Arduinos with Xbee radios. It is not the only solution, but it is a start. I will also describe how to organize a piece that enables a remote Arduino to communicate with Processing via xBees.

I am assuming that you have configured your radios to talk to each other.

Connections for Serial Communication

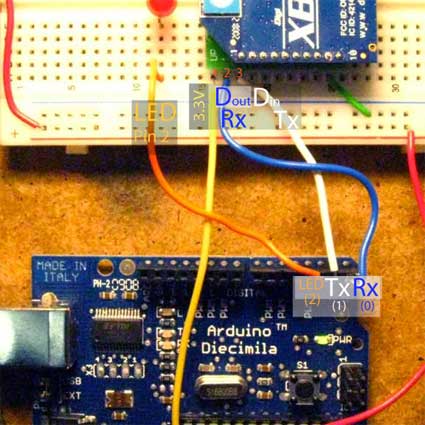

When communicating between Arduinos with Xbees we need a different circuit than we used to configure the radio. If you removed your Arduino chip to configure the radios you will need to replace it for the following to work. Make sure you put the chip in the right way — notched end goes towards the edge of the board (I think that makes sense looking at the board, let me know).

The circuit is simple. Power the xBee with 3.3V from the Arduino. Next, for serial communication you need to connect two wires. Connect Arduino RX (PIN 0) to xBee TX (labeled Dout on the xBee breakout board). Connect Arduino TX (PIN 1) to xBee RX (labeled Din on the xBee breakout board). If you have your xBee and Arduino facing the same way as I do, your communication wires will cross over each other. That is what you want. (NOTE: this is different from the wiring used to configure the xBees.)

A NOTE ON CODING

Important: You will need to disconnect one end of the serial communication wires you just connected EACH time you change the code in your Arduino. Once the code uploads you can RECONNECT the serial communication wires.

The Whole System

Here is an example that uses xBee radios as a communication bridge between two Arduinos. You will need:

- 2 xBee radios

- 2 Arduinos

- 2 LEDs

- 1 button (switch)

- wires, and some 1k and 10k resistors.

- sample CODE.

The sending Arduino needs the serial communication connections above, plus a digital switch (button) connected to PIN 2 and an LED connected to PIN 3. The receiving Arduino has an xBee connected as above and an LED connected to PIN 2.

When working, this system senses button presses, turns on the local LED, and sends a message via the xBees to the remote Arduino. The remote Arduino receives the message and reflects the button state with its LED.
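
The message logic on each side can be sketched in a few lines. This is a hedged illustration rather than the sample code linked above: the one-byte 'H' / 'L' protocol and the names encodeButton, applyMessage and selfCheck are assumptions.

```cpp
#include <cassert>

// Hypothetical one-byte protocol: 'H' = button down, 'L' = button up.
const char MSG_ON = 'H';
const char MSG_OFF = 'L';

// Sender side: turn the button state into the byte to write to serial.
char encodeButton(bool pressed) {
    return pressed ? MSG_ON : MSG_OFF;
}

// Receiver side: work out the new LED state from a received byte.
// Unknown bytes (radio noise, stray characters) leave the LED unchanged.
bool applyMessage(char msg, bool currentLed) {
    if (msg == MSG_ON) return true;
    if (msg == MSG_OFF) return false;
    return currentLed;
}

// Round trip: what the sender encodes, the receiver turns back
// into the same state.
void selfCheck() {
    assert(applyMessage(encodeButton(true), false) == true);
    assert(applyMessage(encodeButton(false), true) == false);
}
```

On the sender the byte would go out with Serial.write(encodeButton(digitalRead(2) == HIGH)); on the receiver, each byte from Serial.read() is passed through applyMessage before writing the LED pin.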

This is a one way system. I will post a two-way system later today.

Ya, But I want to Talk to Processing

If you want to talk to Processing from a remote Arduino then you have two choices. One: use the system above, and receive serial from an Arduino connected to the computer.

OR

Set up a slightly different system. I think this is an easier solution — but you may disagree. Use xBees to replace the cable you are using for communication. (NOTE: you will still need the cable to program your arduino — this is for once you have code in place. Using radios to code is another story).

The system should be developed and debugged with the programming cable first with radios introduced at the end. So I will assume you have some code that allows your arduino to talk to processing already — maybe a sensor reading or similar.

Once that is working, remove the programming cable and connect an xBee to your Arduino as described above in THIS post. On the computer side, where Processing is running, connect an xBee as if you were about to configure it (i.e. with an FTDI breakout or a dechipped Arduino).

If you tell processing to look at the port where you just connected an xBee you should be receiving data — just as you were with the cable.

If I need more HELP?!

As always, this is covered in Tom Igoe’s Making Things Talk.

Documentation Requirements


Online Web Page:

Your documentation should be presented online as a part of your growing webpage / portfolio. So text, image and video should be embedded together in one place.

Feel free to use Vimeo or YouTube to create embedded videos for your web page. More visibility is always a good thing. However, do make sure you keep a high-quality copy of your video documentation for your archives (useful for presentations, off-line viewing, submissions, etc). We would appreciate if you tagged your videos with “Ryerson New Media”, in an effort to create a pool of works made at the school.

Text

Your web page should include two descriptions.

1) Project description – from a conceptual perspective, this text is idea driven and allows you to give voice to what drove you to make the work.

2) Technical description — addresses the inner workings, reveals details about relationships that might not be obvious by simply watching the piece in action. This description should include size, materials, space requirements, platforms.

Video

A 2 – 3 min video that tells the story of experiencing the work. This is NOT a rock video: it is not cut to the beat, and it does not have a soundtrack UNLESS the piece has sound (see also voice over below). You want high quality images that show the audience what the piece is about. You should use a tripod and, when necessary, additional lights. Shoot LONG takes – you want people to understand the work.

As this is a story – you should shoot it like a narrative.

A minimal shot list includes:

- Title & Credits

You should make sure you have a shot at the beginning with your project’s title, and a shot with credits at the end of the video (your name, date, school, etc)

- Establishing shot:

– start wide, let the whole experience be visible in the frame. Let us look at the elements.

- Medium shot:

– if this work is interactive, then have an “actor” come into the work and interact. Have them do what they are supposed to do. You may choose to start this shot with someone in the frame. Make sure we understand this in relation to the first shot. Don’t go all Tarantino on your audience.

– if the work is autonomous then move in and let the piece “perform”

– you may want more than one angle of this action

- Close Up shots:

– shoot close-ups of interactions – are there handheld interfaces? Is there a kiosk, an object? Get a close up of it in use and on its own.

- Medium Shot:

– End with a medium shot – once we have seen what the layers are let us see the whole thing again. It allows us to integrate the information.

Technical Shots:

Include technical shots only as they are necessary for understanding the work.

If the technology is integral to the piece – as an aesthetic or functional element – then include these shots with the above video. If the piece is trying to conceal the technology then it may be better to have a technical video separate from the experience document.

This layer is usually more valuable for juries and organizations that want to see aspects of process. This is a judgment call – there are no hard and fast rules. Keep in mind that some people are deeply turned off by inner-workings videos. Giving an elegant pathway around this layer is wise.

Voice Over OR Title Slides

The work will often determine which is the correct approach. This is partly a judgment call – partly a functional issue. It is often more efficient to describe in spoken word. Text will force you to be more concise. Voice over with a sound piece really doesn’t work (I speak from experience here).

If you do opt for voice over – make a copy with only the sound inherent to the piece and a copy with your voice over. You may wish at some point to show the video without your dialog.

USE someone other than yourself to do the voice – IF at all possible write a script and have someone else read it. You will be grateful you did this in 5 years (months, weeks).

Stills

You should have high quality stills for print media (catalog, advert, the newspaper). These stills should be long shots (wide, establishing), medium shots and close ups. These are tripod held, lit shots, NOT frame grabs from your video – even if your video is HD – they are not frame grabs.

Xbee – configuring the radios

Once your Xbee is wired up, you need to configure it for your application. For basic use we are only interested in 3 settings in the radios. Advanced users will want to look at online docs / manuals to get a sense of what else is possible.

Xbee — Making Connections

Xbee radios can be connected in countless ways. The limits are imagination and, only to a small degree, budget.

This post is an overview of some of the strategies for making electrical connections with equipment in our labs. They start simple and become more complex.

!!!!REMEMBER XBEES ARE 3.3V DEVICES!!!

Cindy Poremba — New Media Kodak Lecture

Thursday night is Cindy Poremba’s Kodak Lecture. Cindy is a New Media maker and theorist; her work will be of interest to many of you!

Full release: cindyporemba_pressrelease